Deploy AI That Works in Production, Not Just in Demos

Use governed data, robust models, and clear guardrails to automate real work with measurable ROI.

Why Most AI and ML Initiatives Stall or Quietly Die

Most AI projects do not fail on models. They fail on architecture and risk.

Data Scatter

Data is scattered across apps, warehouses, and SaaS tools. Models train on exports, not governed data. Risk increases.

Isolated Pilots

Agents and chatbots run as isolated pilots. They never integrate with systems of record or workflows. ROI stays theoretical.

Trust Gap

LLMs hallucinate when prompts stand in for retrieval, grounding, and evaluation. Trust collapses in front of leadership.

No Monitoring

No monitoring, drift tracking, or access control exists. A single failure can trigger reputational and compliance damage.

No Owner

There is no owner for AI infrastructure. Experiments pile up. Nothing becomes a stable capability.

The Result

Spend without durable impact, rising risk, and leadership skepticism of “the AI initiative.”

Business Case — AI as a Controlled Operating Layer

Production-grade AI and ML change unit economics when engineered correctly.

Core Capabilities

AI Agent Frameworks

Design task-specific agents that combine tools, retrieval, and workflow logic. We configure policies for safe execution against internal APIs.

- Multi-step Reasoning & Planning

- Tool Calling & Orchestration

- State & Memory Management
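As a rough illustration of how a policy can gate tool execution, the sketch below registers tools on an agent and refuses any call that is not on an allowlist. Every name here (`ToolPolicy`, `Agent`, `lookup_order`, `refund_order`) is hypothetical and not taken from any specific framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class ToolPolicy:
    """Hypothetical policy: only allowlisted tools may run."""
    allowed_tools: Set[str]

    def permits(self, tool_name: str) -> bool:
        return tool_name in self.allowed_tools


@dataclass
class Agent:
    policy: ToolPolicy
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    memory: List[str] = field(default_factory=list)  # simple state kept across steps

    def register(self, name: str, fn: Callable[..., str]) -> None:
        self.tools[name] = fn

    def call(self, name: str, **kwargs) -> str:
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        if not self.policy.permits(name):
            # Record the denial before refusing, so the attempt stays visible.
            self.memory.append(f"DENIED {name}")
            raise PermissionError(f"tool not permitted: {name}")
        result = self.tools[name](**kwargs)
        self.memory.append(f"{name} -> {result}")
        return result


def lookup_order(order_id: str) -> str:
    # Stand-in for a read-only internal API call (assumed for illustration).
    return f"order {order_id}: shipped"


def refund_order(order_id: str) -> str:
    # Stand-in for a write action that the policy below does not allow.
    return f"order {order_id}: refunded"


agent = Agent(policy=ToolPolicy(allowed_tools={"lookup_order"}))
agent.register("lookup_order", lookup_order)
agent.register("refund_order", refund_order)
result = agent.call("lookup_order", order_id="A1")
```

Keeping the policy separate from the tool registry means a reviewer can change what an agent is allowed to do without touching the tool code.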

Advanced RAG Engineering

Index internal documents into vector stores. We implement hybrid retrieval pipelines (BM25 + Vector) to ground LLM outputs on real data.

- Citation & Grounding

- Hybrid Search Algorithms

- Fine-tuning & Adaptation
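One common way to combine a BM25 ranking with a vector ranking is reciprocal rank fusion. The sketch below assumes the two retrievers have already returned ranked document IDs; the fusion method, the constant `k = 60`, and the document IDs are illustrative assumptions, not a description of a specific pipeline.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge ranked lists of document IDs.

    Each document scores the sum of 1 / (k + rank) over the lists it appears in.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical outputs of a keyword (BM25) retriever and a vector retriever.
bm25_ranking = ["doc_policy", "doc_faq", "doc_pricing"]
vector_ranking = ["doc_faq", "doc_onboarding", "doc_policy"]

fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
```

Documents that both retrievers rank highly rise to the top, while documents found by only one retriever still remain in the candidate list.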

Conversational AI

Deploy domain-tuned ASR and TTS models. Integrate voicebots into telephony and CRM systems to handle real-world interactions.

- Custom Accent Tuning

- Omnichannel Deployment

- Real-time Latency Optimization

Our Tech Stack

The Squad That Owns AI

Named roles with accountability for performance, reliability, and risk.

Architects

End-to-end system design.

ML Engineers

Pipelines & Optimization.

Data Engineers

Readiness & Quality.

Scientists

Tuning & Metrics.

Product Leads

Value & Logic.

Problem → Engineering Fix → Impact

Knowledge Support

Sales Intelligence

Compliance Review

The “No Black Box” Promise

Evaluation Harnesses

Define quality metrics (accuracy, relevance, safety) and benchmark LLM outputs.
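A minimal sketch of such a harness, assuming a model can be treated as a function from prompt to text. The metric functions, the `EvalCase` type, and `stub_model` are hypothetical stand-ins; real relevance and safety metrics would be more involved than these string checks.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    prompt: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def contains_expected(output: str, expected: str) -> float:
    return 1.0 if expected.strip().lower() in output.lower() else 0.0


def run_eval(
    model: Callable[[str], str],
    cases: List[EvalCase],
    metrics: Dict[str, Callable[[str, str], float]],
) -> Dict[str, float]:
    """Return the mean score per metric across all cases."""
    if not cases:
        raise ValueError("at least one evaluation case is required")
    totals = {name: 0.0 for name in metrics}
    for case in cases:
        output = model(case.prompt)
        for name, metric in metrics.items():
            totals[name] += metric(output, case.expected)
    return {name: total / len(cases) for name, total in totals.items()}


def stub_model(prompt: str) -> str:
    # Stand-in for a real LLM call (assumed for illustration).
    answers = {"Capital of France?": "Paris", "2 + 2?": "The answer is 4"}
    return answers.get(prompt, "")


cases = [EvalCase("Capital of France?", "paris"), EvalCase("2 + 2?", "4")]
report = run_eval(
    stub_model,
    cases,
    {"exact_match": exact_match, "contains": contains_expected},
)
```

Running the same cases against each model or prompt version gives a like-for-like benchmark over time.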

Grounding & Citations

Require models to reference retrieved sources so users can verify claims.
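One way to enforce this is to validate citations after generation. The sketch below assumes answers cite sources with bracketed numbers such as `[1]`; that marker format, the function name, and the sample data are assumptions made for illustration.

```python
import re
from typing import Dict, List

CITATION = re.compile(r"\[(\d+)\]")


def check_citations(answer: str, sources: Dict[int, str]) -> Dict[str, List]:
    """Flag citation IDs with no matching source and sentences with no citation."""
    cited = [int(n) for n in CITATION.findall(answer)]
    unknown_ids = sorted({n for n in cited if n not in sources})
    # Naive sentence split on whitespace that follows ., ! or ?
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    uncited = [s for s in sentences if not CITATION.search(s)]
    return {"unknown_ids": unknown_ids, "uncited_sentences": uncited}


# Hypothetical retrieved sources and model answer.
sources = {1: "refund-policy.pdf", 2: "shipping-faq.md"}
answer = "Refunds take 5 days [1]. Shipping is free. Returns need a receipt [3]."

issues = check_citations(answer, sources)
```

An answer with unknown IDs or uncited sentences can then be blocked, regenerated, or shown with a warning, depending on the use case.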

Policy & Safety Layers

Implement content filters, allow/deny lists, and domain-specific constraints.

Logging & Observability

Log prompts, responses, and tool calls with user and context metadata.
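A minimal sketch of a structured log record using only the Python standard library. The field names and the `ai_audit` logger name are hypothetical; a real deployment would also need redaction and retention rules, which this example does not cover.

```python
import json
import logging
import uuid
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional

logger = logging.getLogger("ai_audit")  # hypothetical logger name


def build_record(
    user_id: str,
    prompt: str,
    response: str,
    tool_calls: Optional[List[Dict[str, Any]]] = None,
    context: Optional[Dict[str, Any]] = None,
) -> Dict[str, Any]:
    """Assemble one log record for a single model interaction."""
    return {
        "event_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "prompt": prompt,
        "response": response,
        "tool_calls": tool_calls or [],
        "context": context or {},
    }


def log_interaction(**fields: Any) -> str:
    """Serialize the record as one JSON line and send it to the logger."""
    line = json.dumps(build_record(**fields), sort_keys=True)
    logger.info(line)
    return line


line = log_interaction(
    user_id="u-42",
    prompt="Where is order A1?",
    response="Order A1 has shipped.",
    tool_calls=[{"name": "lookup_order", "args": {"order_id": "A1"}}],
    context={"session": "s-1"},
)
```

One JSON object per line keeps the log easy to ship to whatever search or monitoring system is already in place.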

Access Control

Restrict who can use which agents and data scopes, with audit trails.
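The sketch below shows one simple shape this can take: roles are granted (agent, data scope) pairs, and every check is appended to an audit trail whether it passes or not. The class, role, agent, and scope names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set, Tuple


@dataclass
class AccessController:
    # role -> set of (agent, data_scope) pairs that role may use
    grants: Dict[str, Set[Tuple[str, str]]]
    audit_trail: List[Dict[str, object]] = field(default_factory=list)

    def check(self, user: str, role: str, agent: str, scope: str) -> bool:
        allowed = (agent, scope) in self.grants.get(role, set())
        # Record every decision, including denials.
        self.audit_trail.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "agent": agent,
            "scope": scope,
            "allowed": allowed,
        })
        return allowed


ac = AccessController(grants={"support": {("kb_agent", "public_docs")}})
can_read_docs = ac.check("alice", "support", "kb_agent", "public_docs")
can_read_hr = ac.check("alice", "support", "kb_agent", "hr_records")
```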

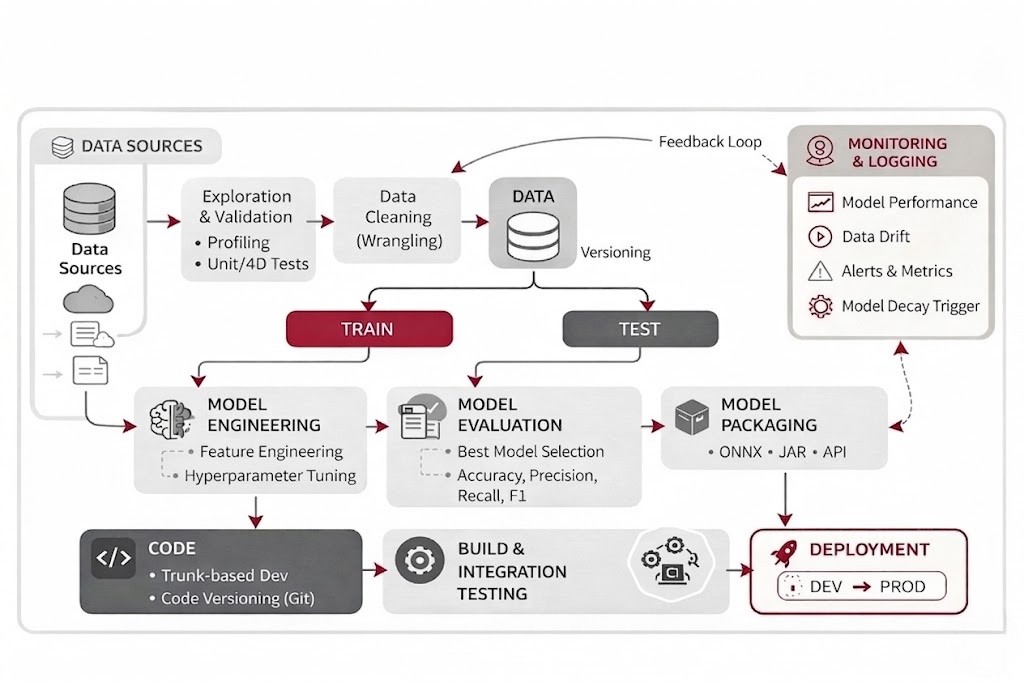

Model Lifecycle

Track versions, drift, retraining events, and deprecation.
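Drift can be quantified in several ways. The sketch below uses the Population Stability Index (PSI) over equal-width bins as one example; the choice of PSI, the bin count, and the sample data are assumptions, and the score at which to trigger retraining would need to be set per use case.

```python
import math
from typing import List, Sequence


def psi(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each proportion at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values at training time and in production.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

no_drift_score = psi(baseline, baseline)
drift_score = psi(baseline, shifted)
```

Storing a score like this alongside each model version makes it possible to see when a retraining event was triggered and why.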

Maturity Evolution

Audit and Frame

- Assess experiments & data readiness.

- Identify high-value use cases.

Architect and Build

- Design on data platforms.

- Implement RAG/Agents with monitoring.

Scale and Govern

- Extend shared components.

- Move to “platform” status.