Engineer LLMs as Part of Your Core Stack

Design prompts, policies, and integrations so LLMs work predictably across products and teams.

When “LLM” Means a Prompt, Not a System

Most LLM projects stall before they touch real workflows.

Prompts run on exported data, not governed warehouses. Risk and compliance block production use.

Teams build chat interfaces without tool access. No tickets move, no records update, and no costs drop.

Outputs are not evaluated. No test sets, no benchmarks, no monitoring. Quality depends on “it looks right.”

Latency and cost spike as usage grows. Finance pushes back before value is proven.

No owner exists for LLM infrastructure. Each team runs its own isolated experiment.

Result: visible spend, weak impact, and growing skepticism about AI from boards and executives.

Rudder Solution: Treat LLMs as engineered components in a governed architecture, not as stand-alone tricks.

Business Case — LLM Engineering as a P&L Lever

Done correctly, LLM systems change your economics.

Time

Compress research, drafting, and analysis work from hours to minutes, across many roles.

Cost

Reduce manual reading, classification, and summarization in support, ops, and compliance teams.

Revenue

Improve response quality and personalization in sales, marketing, and service interactions.

Risk

Lower error and policy-breach risk through grounding, constraints, and evaluation.

Leaving LLMs as isolated pilots keeps the organization manual and exposes it to uncontrolled AI behavior.

Core Technical Capabilities

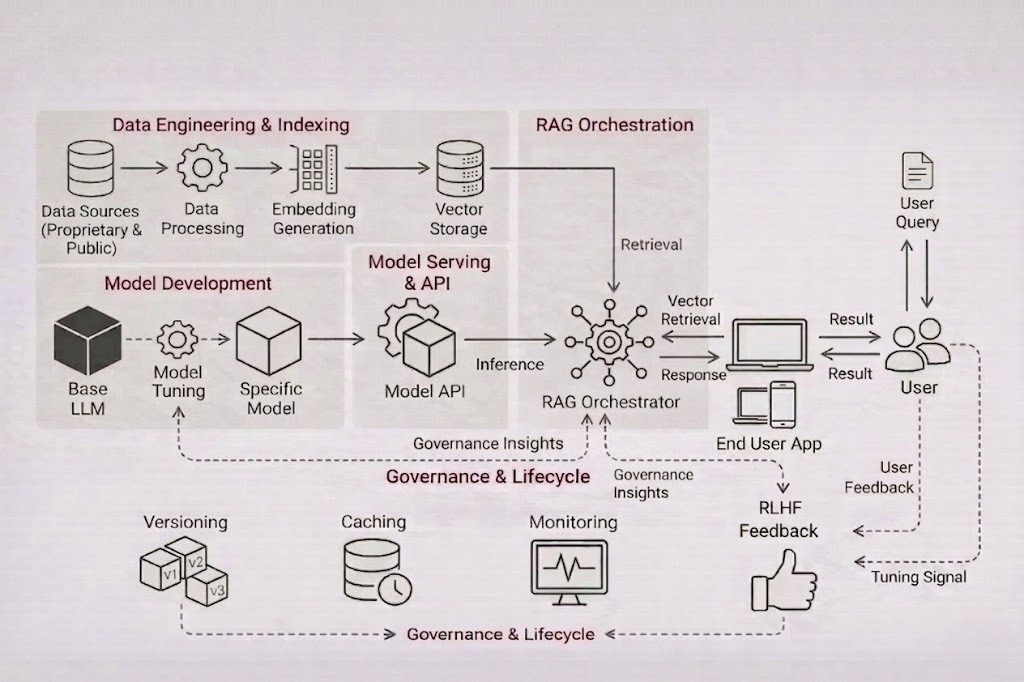

A comprehensive engineering framework designed to move LLMs from prototype to production reliably.

Model and Architecture Strategy

Select the right model and deployment pattern for your constraints. Compare commercial APIs and open-weight models by latency, cost, and compliance profile.

Lower model spend per use case and fewer re-platform cycles.
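
As a rough illustration of comparing options by latency, cost, and compliance profile, the sketch below filters candidates against hard constraints and then picks the cheapest one that remains. The model names, prices, and latency figures are invented placeholders, not measurements of any real product, and `select_model` is a hypothetical helper.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str                    # hypothetical label, not a real product
    p95_latency_ms: float        # illustrative figure
    cost_per_1k_tokens: float    # illustrative USD price
    data_stays_in_region: bool   # stand-in for a compliance requirement


def select_model(
    options: Iterable[ModelOption],
    max_latency_ms: float,
    requires_in_region: bool,
) -> Optional[ModelOption]:
    """Return the cheapest option that meets the latency and compliance constraints."""
    eligible = [
        o for o in options
        if o.p95_latency_ms <= max_latency_ms
        and (o.data_stays_in_region or not requires_in_region)
    ]
    # default=None covers the case where no option satisfies the constraints.
    return min(eligible, key=lambda o: o.cost_per_1k_tokens, default=None)


CANDIDATES = [
    ModelOption("hosted-api-large", 900.0, 0.0100, False),
    ModelOption("hosted-api-small", 300.0, 0.0020, False),
    ModelOption("self-hosted-open-weight", 450.0, 0.0035, True),
]

choice = select_model(CANDIDATES, max_latency_ms=500, requires_in_region=True)
print(choice.name if choice else "no eligible model")
```

Treating compliance as a hard filter rather than a weighted score keeps a cheaper but non-compliant option from ever winning on price.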

Prompt, System, and Tool Design

Move from ad-hoc prompts to structured, testable instructions. Define system prompts that encode policy, tone, and boundaries.

More stable outputs and higher task completion rates.
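
One way to make instructions structured and testable is to hold the system prompt as data and render it through a single function, so policy, tone, and boundaries can be checked in unit tests. The following is a minimal sketch; the `SystemPrompt` class, its field names, and the example policy text are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemPrompt:
    role: str
    tone: str
    policies: tuple[str, ...] = ()
    boundaries: tuple[str, ...] = ()

    def render(self) -> str:
        """Render the prompt deterministically so tests can assert on its content."""
        lines = [f"You are {self.role}.", f"Tone: {self.tone}.", "Policies:"]
        lines += [f"- {p}" for p in self.policies]
        lines.append("Never do the following:")
        lines += [f"- {b}" for b in self.boundaries]
        return "\n".join(lines)


# Hypothetical prompt for a ticket-triage assistant.
SUPPORT_PROMPT = SystemPrompt(
    role="a support assistant for internal ticket triage",
    tone="concise and neutral",
    policies=("Cite the source document for every answer.",),
    boundaries=("Reveal customer personal data.",),
)

print(SUPPORT_PROMPT.render())
```

Because the rendered text is deterministic, a change to a policy line shows up as a reviewable diff and a failing test rather than a silent behavior shift.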

Fine-Tuning, Adaptation, and Control

Adapt models when generic behavior is not enough. Use fine-tuning, LoRA, or adapters only where cost and risk justify it.

Higher accuracy on domain tasks, reducing rework.

Integration With Your Data and Systems

Connect to warehouses, RAG systems, search, and APIs under strict scopes. Enforce data contracts and masking for sensitive fields.

Real process steps complete faster and cheaper.
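
Masking sensitive fields before they reach a prompt could look like the sketch below. The field names in `SENSITIVE_FIELDS` and the simple email pattern are assumptions; a real deployment would take its classification from the data contract and would likely need broader detection than one regular expression.

```python
import re

# Hypothetical classification; in practice this comes from the data contract.
SENSITIVE_FIELDS = {"email", "phone", "ssn"}

# Deliberately simple pattern; it will not catch every valid address format.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def mask_record(record: dict) -> dict:
    """Return a copy of the record that is safe to place in model context."""
    masked = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            # Classified fields are redacted regardless of their content.
            masked[key] = "[REDACTED]"
        elif isinstance(value, str):
            # Free-text fields are scrubbed for embedded email addresses.
            masked[key] = EMAIL_PATTERN.sub("[EMAIL]", value)
        else:
            masked[key] = value
    return masked


row = {
    "name": "Ada",
    "email": "ada@example.com",
    "note": "Reach me at ada@example.com today",
    "tickets": 3,
}
print(mask_record(row))
```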

Evaluation, Monitoring, and Cost Control

Engineer LLMs with the same rigor as any core system. Track correctness, safety, latency, and cost per call by use case.

Predictable operating cost and reduced risk.
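
Tracking cost and latency per call by use case can start as a small aggregation over call records, as sketched here. The token prices are illustrative placeholders rather than any vendor's actual rates, and `CallRecord` and `summarize` are hypothetical names.

```python
from collections import defaultdict
from dataclasses import dataclass

# Illustrative prices in USD per 1,000 tokens; substitute your contract rates.
PRICE_PER_1K_INPUT = 0.003
PRICE_PER_1K_OUTPUT = 0.015


@dataclass(frozen=True)
class CallRecord:
    use_case: str
    input_tokens: int
    output_tokens: int
    latency_ms: float


def call_cost(record: CallRecord) -> float:
    """Cost of a single call under the assumed token prices."""
    return (
        record.input_tokens / 1000 * PRICE_PER_1K_INPUT
        + record.output_tokens / 1000 * PRICE_PER_1K_OUTPUT
    )


def summarize(records: list[CallRecord]) -> dict[str, dict[str, float]]:
    """Group calls by use case and report call count, total cost, and mean latency."""
    grouped: dict[str, list[CallRecord]] = defaultdict(list)
    for record in records:
        grouped[record.use_case].append(record)
    return {
        use_case: {
            "calls": len(calls),
            "total_cost_usd": round(sum(call_cost(c) for c in calls), 6),
            "avg_latency_ms": sum(c.latency_ms for c in calls) / len(calls),
        }
        for use_case, calls in grouped.items()
    }


LOG = [
    CallRecord("support", 1000, 200, 800.0),
    CallRecord("support", 2000, 400, 1200.0),
    CallRecord("sales", 500, 100, 400.0),
]
print(summarize(LOG))
```

Reporting by use case rather than by team or API key makes it easier to compare spend against the value each workflow is expected to deliver.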


The Squad Behind LLM Engineering

Projects are run by a cross-functional team.

No “prompt-only” projects. Teams are structured to deliver stable systems that survive usage at scale.

Example LLM Engineering Use Cases

Support & Ops

Teams read long SOPs, contracts, or tickets before acting. Cycle times and cost are high.

LLM system integrated with RAG and ticketing, generating answers and next-step options with citations.

Reduced handling time, faster resolution, and lower support cost per case.

Sales Prep

Reps compile context from CRM, email, and usage data manually. Prep time limits coverage.

LLM service that assembles account briefs, risk flags, and talk tracks from governed data.

Shorter prep time, more meetings per rep, and higher revenue per headcount.

Compliance

Staff misread policies and regulations. Compliance teams re-explain rules repeatedly.

LLM configured with policy-focused prompts, RAG, and strict guardrails, producing cited answers.

Fewer missteps, lower compliance risk, and reduced time spent answering repeated questions.

Quality, Governance, and “No Black Box” Behavior

LLMs must be explainable, not magical.

Evaluate against domain-specific test sets with graded metrics, not just ad-hoc checks.

Use retrieval and system constraints to keep outputs aligned with verified data.

Enforce IAM and data classification across prompts and context.

Capture prompts, context, responses, and decisions for audits and incident reviews.

Version models, prompts, and policies; roll back safely when needed.

Keep spec sheets for each LLM endpoint: purpose, inputs, outputs, and risks.
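
To make the idea of a domain-specific test set with graded metrics concrete, the sketch below scores each answer by the fraction of required terms it contains and compares the mean against a release threshold. The term-matching grader is a deliberately crude stand-in for whatever metric a team adopts, and `stub_model` is a hypothetical placeholder for a real model call.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class EvalCase:
    question: str
    required_terms: tuple[str, ...]


def grade(answer: str, case: EvalCase) -> float:
    """Score in [0, 1]: the share of required terms present in the answer."""
    if not case.required_terms:
        return 1.0
    text = answer.lower()
    hits = sum(1 for term in case.required_terms if term.lower() in text)
    return hits / len(case.required_terms)


def evaluate(
    model_fn: Callable[[str], str],
    cases: Sequence[EvalCase],
    threshold: float = 0.8,
) -> dict:
    """Run every case through the model and compare the mean score to the threshold."""
    if not cases:
        raise ValueError("test set is empty")
    scores = [grade(model_fn(case.question), case) for case in cases]
    mean = sum(scores) / len(scores)
    return {"scores": scores, "mean_score": mean, "passed": mean >= threshold}


CASES = [
    EvalCase("What is the refund window?", ("30 days", "receipt")),
    EvalCase("Who approves exceptions?", ("compliance",)),
]

# Hypothetical stand-in for a model endpoint, used only to exercise the harness.
CANNED = {
    "What is the refund window?": "Refunds are accepted within 30 days.",
    "Who approves exceptions?": "The compliance team approves exceptions.",
}


def stub_model(question: str) -> str:
    return CANNED[question]


print(evaluate(stub_model, CASES))
```

Running the same test set against every new model, prompt, or policy version gives a comparable score to attach to each release and to consult before a rollback.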

Maturity Path — From Single Use Case to LLM Platform

Assess and Frame

- Audit current AI experiments, data readiness, and risk constraints.

- Select use cases with clear value in revenue, cost, risk, or time.

Engineer and Deploy

- Design architecture, prompts, and retrieval for prioritized use cases.

- Implement serving, evaluation, and monitoring.

- Launch with controlled user groups and clear success metrics.

Scale and Govern

- Generalize components into a shared LLM platform.

- Add new use cases through defined onboarding patterns.

- Optimize cost, latency, and reliability as usage expands.

Each phase increases business impact while keeping risk and spend under control.