Build Data Infrastructure That Can Survive Real Use

Engineer pipelines, warehouses, and models that keep analytics and AI reliable at scale.

Why Most Data Engineering Initiatives Become Expensive Sandboxes

Tools are bought before a data model exists.

Complexity rises, but decision speed does not.

Each team builds its own pipelines.

Metrics drift. Reporting disputes never stop.

Key jobs rely on fragile scripts and manual runs.

Outages appear at month-end.

AI and ML efforts sit on poor data.

Models look impressive but never enter production.

No one owns lineage, testing, or documentation.

Risk grows quietly with every change.

The Business Case: Data Engineering as Cost, Risk, and Time Control

A disciplined data engineering layer changes unit economics.

Reduce reporting effort

Automated pipelines and models replace manual pulls and merges. Finance and ops reclaim working hours.

Cut decision latency

Data lands ready for analysis within agreed SLAs. Leadership moves in days, not weeks.

Lower incident risk

Tests, monitoring, and lineage reduce bad-data surprises in board packs and regulatory reports.

Control platform cost

Architecture and models are designed for SME realities, avoiding unnecessary tools and bloat.

Make AI viable

Clean, modeled data makes AI and ML predictable, not a permanent experiment.

Inaction keeps headcount tied up in low-value work and leaves key decisions exposed to silent data risk.

From Raw Ingestion to Query-Ready Gold Tables

Data Warehousing: Architected for SME Scale

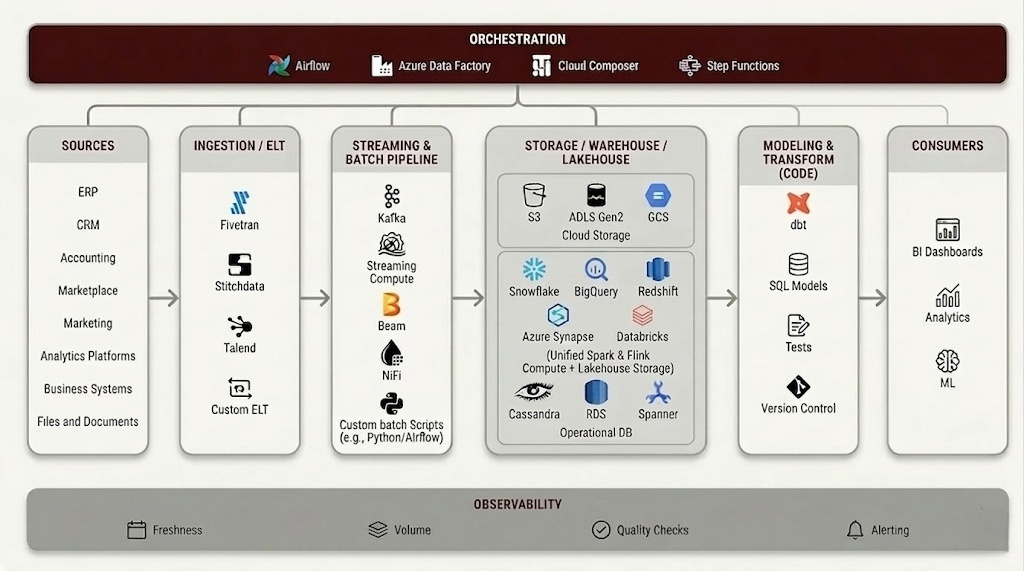

Design cloud data warehouses or lakehouses with clear raw, staging, and curated layers.

Implement dimensional or data vault models tuned to your business domains.

Optimize storage and compute patterns to control spend as data volume grows.

Data Pipelines: Reliable Flow from Source to Warehouse

Build ELT/ETL pipelines from ERP, CRM, product, web, and third-party systems.

Handle schema changes, late data, and deduplication with engineered rules.

Orchestrate jobs with dependency management, retries, and alerting.
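As an illustration of what an "engineered rule" for deduplication and late data can look like, here is a minimal sketch in Python. It assumes each record carries a business key and a version timestamp. The field names `order_id` and `updated_at` and the helper `deduplicate_latest` are hypothetical, chosen only for this example. The rule is to keep the newest version of each key, so an older version that arrives late never overwrites a newer one.

```python
from datetime import datetime
from typing import Iterable


def deduplicate_latest(
    records: Iterable[dict],
    key: str = "order_id",        # hypothetical business key
    version: str = "updated_at",  # hypothetical version timestamp
) -> list[dict]:
    """Keep one record per key: the one with the newest version timestamp."""
    latest: dict = {}
    for record in records:
        current = latest.get(record[key])
        # Replace only when the incoming record is strictly newer, so exact
        # duplicates and late-arriving older versions are ignored.
        if current is None or record[version] > current[version]:
            latest[record[key]] = record
    return sorted(latest.values(), key=lambda r: r[key])


rows = [
    {"order_id": 1, "amount": 100, "updated_at": datetime(2024, 1, 2)},
    {"order_id": 2, "amount": 50, "updated_at": datetime(2024, 1, 1)},
    # Older version of order 1 that arrives late:
    {"order_id": 1, "amount": 90, "updated_at": datetime(2024, 1, 1)},
    # Exact duplicate of order 2:
    {"order_id": 2, "amount": 50, "updated_at": datetime(2024, 1, 1)},
]

clean = deduplicate_latest(rows)
```

In a warehouse the same rule is usually expressed in SQL or in the transformation tool in use. The point is that the behavior is written down and testable rather than left to load order.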

Data Analysis Enablement:

Models That BI and AI Can Trust

Create semantic layers and “gold” tables for finance, sales, operations, and risk.

Standardize KPI definitions for revenue, margin, churn, and operational metrics.

Serve feature-ready datasets for downstream ML and analytics workloads.


Who Designs and Operates Your Data Platform

Data Architects

Define warehouse structure, integration patterns, and governance controls.

Data Engineers

Build pipelines, transformations, and reliability tooling.

Analytics Engineers

Design semantic models and KPI definitions.

Domain Specialists

Align models with real commercial and operational processes.

Where Data Engineering Starts Paying Back Immediately

Finance and Leadership Reporting Without Rebuilds

Use Case 1: Month-end reporting requires multiple exports, manual joins, and late-night fixes.

Central warehouse with governed pipelines from ERP, CRM, and banks; finance “gold” tables.

Reporting time drops sharply. Error risk reduces. Leadership reviews move earlier in the month.

Ecommerce or SaaS Revenue and Funnel Clarity

Use Case 2: Marketing, product, and finance show different numbers for revenue and funnel performance.

Unified event and transaction model across web, app, billing, and CRM.

One version of MRR, churn, and funnel KPIs. Growth bets become testable and defendable.

Supply Chain and Operations Visibility

Use Case 3: Inventory, demand, and logistics data sit in separate systems. Decisions depend on offline spreadsheets.

Pipelines from ERP, WMS, TMS, and planning tools into a single supply chain model.

Faster S&OP cycles, reduced manual effort, and fewer expensive “surprise” stock or freight issues.

Data That Can Be Audited, Not Just Queried

Quality and governance are treated as core features. Business effect: Fewer data incidents, easier audits, and higher confidence in decisions made from the platform.

- Testing: Schema, null, range, and business-rule tests on critical tables and metrics.

- Lineage: End-to-end visibility from report or model back to source systems.

- Documentation: Clear definitions for datasets, fields, and KPIs, maintained with the code.

- Change Management: Version control, code review, and release processes for transformations and pipelines.

- Handover: Playbooks and runbooks ensure internal teams can operate and extend the platform.
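To make the four test types named under Testing concrete, here is a minimal sketch in plain Python. The invoice fields, the `REQUIRED` schema, and the `check_row` helper are assumptions made for this example, not part of any specific tool. Real platforms typically run equivalent checks inside the warehouse or the transformation framework.

```python
# Hypothetical schema for a finance "gold" table row.
REQUIRED = {"invoice_id": int, "net": float, "tax": float, "gross": float}


def check_row(row: dict) -> list[str]:
    """Return a list of failed checks for one row (empty list means pass)."""
    failures = []

    # Schema and null tests: every field is present, non-null, and of the
    # expected type.
    for field, expected_type in REQUIRED.items():
        if field not in row:
            failures.append(f"schema: missing {field}")
        elif row[field] is None:
            failures.append(f"null: {field}")
        elif not isinstance(row[field], expected_type):
            failures.append(f"schema: {field} is not {expected_type.__name__}")
    if failures:
        return failures  # value checks are meaningless on a malformed row

    # Range test: net amounts are assumed to be non-negative in this example.
    if row["net"] < 0:
        failures.append("range: net is negative")

    # Business-rule test: net plus tax must equal gross, within rounding.
    if abs(row["net"] + row["tax"] - row["gross"]) > 0.005:
        failures.append("business rule: net + tax != gross")

    return failures


good = check_row({"invoice_id": 1, "net": 100.0, "tax": 20.0, "gross": 120.0})
null_tax = check_row({"invoice_id": 2, "net": 50.0, "tax": None, "gross": 60.0})
bad_values = check_row({"invoice_id": 3, "net": -5.0, "tax": 1.0, "gross": 10.0})
```

Running checks like these on every load, and failing the pipeline when a critical table breaks a rule, is what keeps bad data out of downstream reports.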

From Chaos to Governed Platform: Maturity Evolution

Stabilize and Audit

Map current data flows, pipelines, and “shadow” processes in spreadsheets. Identify critical reports and decision points at risk from bad or late data. Stabilize key jobs and plug immediate reliability gaps.

Build and Deploy a Usable Platform

Design the warehouse model and semantic layers aligned to business domains. Implement core pipelines and transformations with testing and monitoring. Roll out high-value datasets and dashboards that replace manual effort.

Scale, Optimize, and Enable AI

Extend coverage to new systems, teams, and use cases. Optimize compute, storage, and workloads for cost and speed. Provide robust feature stores and curated data for AI and ML initiatives.

Each phase is designed to improve revenue visibility, lower operating and cloud cost, reduce data risk, and compress time-to-decision.